Vibe Coding Is Not Engineering, and the Risks Are Bigger Than You Think

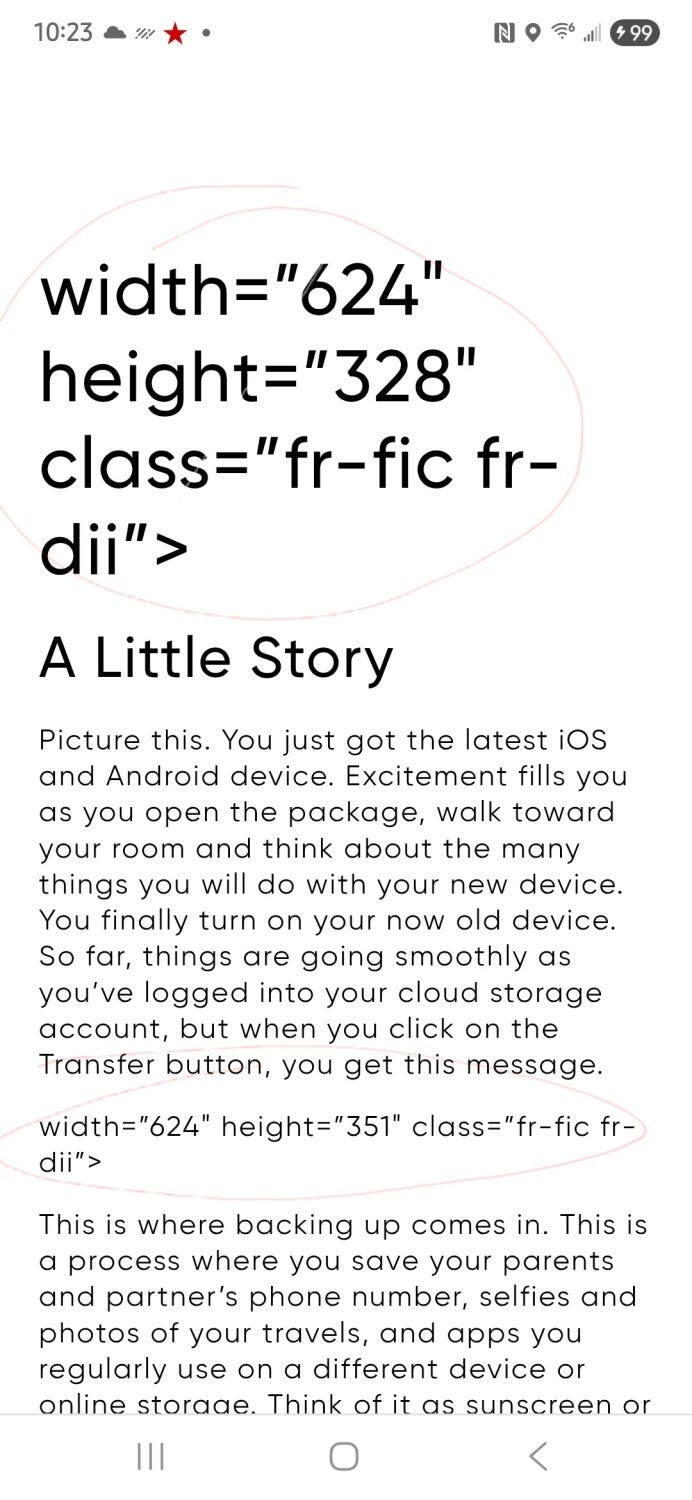

What you’re seeing isn’t supposed to be visible to users at all. Instead of a clean web experience, the page is leaking raw HTML attributes directly into the interface. I have seen several pages like this lately, and the pattern is becoming alarming.

These are formatting instructions meant for the browser’s rendering engine. They belong inside markup layers or content management systems, not displayed directly in front of users. Yet here they are, sitting in the middle of a production page as if they were normal text.

To most people, this might look like a harmless formatting glitch. Maybe the page just loaded incorrectly. Maybe it’s just a small bug that slipped through.

But engineers see something very different. This is a signal that something in the system failed, likely somewhere between content generation, markup rendering, and final UI output. It means validation failed, or a formatting layer was skipped, or code that was meant to transform content never ran correctly.

And this is exactly the kind of issue that starts appearing when teams move faster than their verification processes can keep up.

A phrase that has quietly entered engineering culture over the last year is “vibe coding.” The idea is simple. Developers lean heavily on AI tools to generate code quickly, prompting their way through features and workflows. Instead of designing systems deliberately, they iterate rapidly with generated snippets until something appears to work. If the code runs, if the UI loads, if the feature appears functional, it gets shipped.

In early experimentation or prototypes, this approach can feel magical. Entire features appear in seconds. Integrations that once took hours can be scaffolded in minutes. Developers can move incredibly fast, and teams often feel like productivity has skyrocketed.

But speed can create an illusion of correctness. Software can look like it works while silently accumulating issues underneath the surface. A system may pass basic checks while still containing security gaps, unstable integrations, missing validation layers, or fragile logic that breaks under real user behavior.

This is the core danger of vibe coding: it prioritizes momentum over verification. And while that might work for prototypes, production systems, especially those operating at scale, cannot be built on vibes. When experienced engineers look at the screenshot above, they don’t just see stray HTML attributes. They see the chain of possible failures that allowed those attributes to surface.

Perhaps a CMS injected raw markup that the frontend failed to sanitize. Maybe a rendering function assumed a different content structure and skipped a transformation step. It could be that AI-generated code introduced a shortcut that worked locally but failed under production data. Or perhaps an escaping function behaved differently in staging versus production.
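The first of those failure modes, CMS content reaching the page without being escaped, can be illustrated in a few lines. This is only a sketch, not the code behind the screenshot: it assumes a Python rendering layer, and the helper name `render_paragraph` and the sample input are hypothetical. The only library call is the standard library's `html.escape`.

```python
from html import escape


def render_paragraph(cms_text: str) -> str:
    """Wrap CMS-supplied text in a <p> element, escaping it first.

    Hypothetical helper: escaping turns <, >, & and quotes into entities,
    so the browser displays them as text instead of parsing them as markup.
    """
    return f"<p>{escape(cms_text, quote=True)}</p>"


if __name__ == "__main__":
    # Hypothetical untrusted input of the kind a CMS field might contain.
    untrusted = '<img src=x onerror="alert(1)">'
    print(render_paragraph(untrusted))
```

The point is less the function itself than where it sits: escaping has to happen exactly once, at a known layer. Escaping twice, or escaping content that was meant to be rendered as markup, is one plausible way attribute text ends up visible on a page.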

These issues are rarely obvious during quick testing. They show up when systems interact with real data, real user inputs, and real production infrastructure. That’s why experienced engineering teams spend significant time validating edge cases, testing integrations, and reviewing code paths that might break in unexpected ways.
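As a rough illustration of the kind of check that catches this before users do, the sketch below extracts the text a visitor would actually see and flags anything that looks like a stray HTML attribute. The names `VisibleText` and `leaked_markup` and the regular expression are assumptions made for this example; it is a heuristic that could sit in a test suite, not a complete validator.

```python
import re
from html.parser import HTMLParser

# Heuristic (an assumption for this sketch): attribute-like fragments
# such as class="hero" or data-id="7" appearing in visible text.
ATTRIBUTE_LIKE = re.compile(r'\b[a-zA-Z][\w-]*\s*=\s*"[^"]*"')


class VisibleText(HTMLParser):
    """Collect only the text nodes a user would see on the page."""

    def __init__(self) -> None:
        super().__init__(convert_charrefs=True)
        self.chunks = []
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def leaked_markup(page_html: str) -> list[str]:
    """Return attribute-like fragments found in the page's visible text."""
    parser = VisibleText()
    parser.feed(page_html)
    parser.close()  # flush any buffered text
    return ATTRIBUTE_LIKE.findall(" ".join(parser.chunks))
```

Run against rendered output from staging with production-like content, a non-empty result would fail the build. Legitimate pages that display code samples would need an allowlist, which is exactly the sort of edge case review is meant to surface.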

In other words, engineers aren’t just writing code; they are constantly asking “what could go wrong?” That mindset is what prevents small formatting errors from turning into larger system failures. Without that mindset, issues like the one in the screenshot become increasingly common.

Every engineering organization pays what many developers call the verification tax. This is the time, effort, and discipline required to ensure that software behaves correctly in real-world environments. Verification is rarely glamorous work. It includes validating inputs, handling unexpected API responses, writing tests, reviewing code, securing endpoints, and making sure every integration behaves as expected under load.
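To make "handling unexpected API responses" concrete, here is a minimal sketch of paying that tax at a single boundary. Everything in it is hypothetical: the `MenuItem` type, the field names `name` and `price_cents`, and the JSON shape are invented for illustration and do not describe any real API.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class MenuItem:
    # Hypothetical shape, assumed for this example only.
    name: str
    price_cents: int


def parse_menu_item(raw: str) -> MenuItem:
    """Parse one JSON object and fail loudly if its shape is unexpected."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"response is not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    name = data.get("name")
    price = data.get("price_cents")
    if not isinstance(name, str) or not name.strip():
        raise ValueError("'name' must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(price, bool) or not isinstance(price, int) or price < 0:
        raise ValueError("'price_cents' must be a non-negative integer")

    return MenuItem(name=name.strip(), price_cents=price)
```

Code that merely "runs" would index into the response and move on. The version above takes a few extra lines, but a malformed response stops at the boundary with a clear error instead of surfacing later as a broken page.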

AI can dramatically accelerate code generation, but it does not eliminate the verification tax. In fact, it often increases it. The faster code is generated, the more time engineers must invest in confirming that the generated logic operates safely within their system architecture.

Skipping verification doesn’t remove the cost; it only delays it. And when the verification tax arrives late, it arrives with interest. Bugs become harder to trace. Systems become fragile. Teams dedicate weeks to troubleshooting issues that early review cycles could have detected.

The most mature engineering organizations understand this tradeoff clearly. Speed is valuable, but reliability is essential. Verification is the price we pay for building software that users can trust.

Why Large Companies Cannot Afford This

For large organizations, the stakes of skipping verification are dramatically higher.

A startup might ship a small bug that causes a UI glitch or minor inconvenience. But when large companies ship unstable code, the consequences scale rapidly. A small integration failure could interrupt thousands of transactions. A poorly validated endpoint could expose sensitive customer data. A security oversight could lead to regulatory investigations or major compliance violations. This post was inspired by seeing a big, well-known restaurant chain run into exactly this issue.

This is why mature engineering organizations invest heavily in processes that may appear slow from the outside: architecture reviews, security audits, testing pipelines, reliability engineering teams, and formal code review processes. These mechanisms exist not to slow development but to ensure that systems behave predictably and safely at scale.

At large companies, shipping broken systems isn’t just inconvenient; it can cost millions.

Bad Actors Are Moving Fast Too

There is another reality that makes verification even more important today: attackers are also benefiting from AI. Malicious actors are increasingly using automated tools to generate scripts that probe systems for weaknesses. They scan applications for improperly validated inputs, exposed APIs, unsecured tokens, and authentication flaws. Many of these vulnerabilities exist precisely in places where systems were built quickly without careful validation.

Software unintentionally exposes attack surfaces when it skips verification. A missing security check, an unvalidated parameter, or a misconfigured endpoint can become the entry point for exploitation. In other words, while some teams are using AI to move faster in development, attackers are using the same tools to move faster in exploitation.

This makes engineering discipline more important than ever. Strong verification processes aren’t just about reliability; they are also about defense.

Engineers Matter More Than Ever

Despite all the excitement around AI-assisted development, one truth remains unchanged: the value of engineers has never been about typing code. The real value of engineering lies in designing systems that work reliably under unpredictable conditions. Engineers anticipate failure modes, secure infrastructure boundaries, and build architectures that can scale safely as systems grow.

AI can generate syntax, scaffold applications, and accelerate development workflows. But it cannot fully understand the broader system context in which software operates. It cannot anticipate every edge case, every security implication, or every integration nuance. That responsibility still belongs to engineers.

And as software becomes more deeply embedded in financial systems, healthcare platforms, and global infrastructure, the importance of that responsibility only grows.

The future of software development will not be defined by vibe coding. It will be defined by teams that combine AI speed with engineering rigor, using powerful tools while maintaining the discipline required to build systems that the real world can depend on.